Today I learned something interesting.

Old habits die hard and seismics is a pretty old business.

There are a couple of standards for storing seismic data in files. One of them is the so-called SEG-Y standard. A SEG-Y file starts with information about the data set, called the header, followed by the actual seismic data. Newer formats like the so-called Seismic Unix (SU) files do not even carry some of these old headers over.

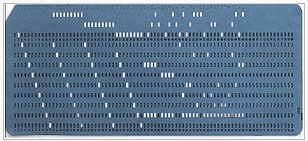

These EBCDIC headers, or “Extended Binary Coded Decimal Interchange Code” headers, go back to 1964, when computers still used punched cards. Nowadays we can read them without punched cards, just like any other character encoding made of bits and bytes. And they are still in use. Essential data is stored in this pretty antique format, and you should definitely have a look at it before doing any processing on seismic data. Old habits die hard.

EBCDIC punch card from 1964. Source

I remembered seeing these in data at my internship with Fugro, so I went searching for a tool to read these headers. I rely on the Seismic Un*x software bundle, but I figured it only has SU-specific software, and like I said, the EBCDIC headers are completely ignored. So I decided to just program it myself. In my experience, writing your own code usually helps a lot with understanding something anyway. And it did.

I wrote one line of code to extract the EBCDIC headers with just Linux tools.

dd bs=3200 count=1 conv=ascii if=data.segy | sed 's/.\{80\}/&\n/g' | sed 's/^.\{3\}/& /g' > 004_EBCDIC.txt

You just have to replace data.segy with your dataset and 004_EBCDIC.txt with your desired output.

How does it work?

First, we have to talk about the | operator.

In Linux we can chain different programs together to streamline our workflow. The | is that connection: it tells the shell to feed the output of one program into the next program as its input. Now let’s look at the individual programs.
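To see the pipe on its own before any seismic data is involved, here is a minimal sketch. The three words are arbitrary demo input and have nothing to do with SEG-Y.

```shell
# Three programs chained with pipes:
# printf writes three lines, sort orders them alphabetically,
# and head keeps only the first line of the sorted result.
first=$(printf 'sed\ndd\nhead\n' | sort | head -n 1)
echo "$first"
```

Each program only sees the output of the one before it, which is exactly how the header one-liner hands data from dd to sed.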

dd is the universal Unix converter. bs defines the so-called block size in bytes, that is, the size of the chunks of data the program processes. count tells the program how many of those chunks it is supposed to process. As you might have already guessed at this point, the SEG-Y text header is 3200 bytes long. conv=ascii tells the program to convert the EBCDIC data to ASCII, a format that can be read on every modern computer. if just defines the input file.

That is it: now you have the SEG-Y header in a readable format. However, the formatting is horrible, so I piped the data into two sed commands to do the formatting for us. These use so-called regular expressions to do text manipulation. The first sed inserts a line break after every 80 characters, and the second inserts a space after the first 3 characters of each line. The line breaks significantly increase the readability. The space after 3 characters is optional and is just my personal preference for making it more readable.
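If you have no SEG-Y file at hand, the steps can be tried on a fake header. The following is only a sketch under a few assumptions: it builds 40 made-up lines of 80 characters, converts them to EBCDIC with dd's conv=ebcdic, and then runs the same pipeline on the result. All file names and the "DEMO CARD" text are invented for this demo, and the \n in the sed replacement relies on GNU sed as found on Linux.

```shell
# Sketch: build a fake 3200-byte EBCDIC header and read it back.
# All file names here are made up for the demo.
tmp=$(mktemp -d)

# 40 "cards" of exactly 80 characters each, written without line breaks.
i=1
while [ "$i" -le 40 ]; do
    printf 'C%2d %-76s' "$i" "DEMO CARD"
    i=$((i + 1))
done > "$tmp/header_ascii.txt"

# Convert the ASCII text to EBCDIC to mimic the start of a SEG-Y file.
dd conv=ebcdic if="$tmp/header_ascii.txt" of="$tmp/demo.segy" 2>/dev/null

# The same pipeline as in the post, applied to the demo file.
dd bs=3200 count=1 conv=ascii if="$tmp/demo.segy" 2>/dev/null \
    | sed 's/.\{80\}/&\n/g' \
    | sed 's/^.\{3\}/& /g' > "$tmp/demo_header.txt"

size=$(wc -c < "$tmp/header_ascii.txt")
lines=$(wc -l < "$tmp/demo_header.txt")
echo "header bytes: $size, lines after formatting: $lines"
head -n 3 "$tmp/demo_header.txt"
```

The byte count and line count it prints are a quick sanity check that 3200 bytes really split into 40 lines of 80 characters.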

What does it look like?

In the end we will get something like this.

C 1 CLIENT COMPAGNY CREW NO

C 2 LINE 00l035xd AREA MAP ID

C 3 REEL NO DAY-START OF REEL YEAR OBSERVER

C 4 INSTRUMENT: DELPH MODEL xx SERIAL NO

C 5 DATA TRACES/RECORD 1 AUXILIARY TRACES/RECORD 0 CDP FOLD

C 6 SAMPLE INTERVAL 62 SAMPLES/TRACE 4800 BITS/IN 16 BYTES/SAMPLE 2

C 7 RECORDING FORMAT FORMAT THIS REEL MEASUREMENT SYSTEM

C 8 SAMPLE CODE: FIXED PT

C 9 GAIN TYPE: FIXED

C10 FILTERS: ALIAS 8000HZ NOTCH HZ BAND - HZ SLOPE - DB/OCT

C11 SOURCE: TYPE NUMBER/POINT POINT INTERVAL

C12 PATTERN: LENGTH WIDTH

C13 SWEEP: START HZ END HZ LENGHT MS CHANNEL NO TYPE

C14 TAPER: START LENGHT MS END LENGHT MS TYPE

C15 SPREAD: OFFSET MAX DISTANCE GROUP INTERVAL

C16 GEOPHONES: PER GROUP SPACING FREQUENCY MFG MODEL

C17 PATTERN: LENGTH WIDTH

C18 TRACES SORTED BY: RECORD

C19 AMPLITUDE RECOVERY: NONE

C20 MAP PROJECTION ZONE ID COORDINATE UNITS

C21 PROCESSING:

C22 PROCESSING:

C23

C24

C25

C26

C27

C28

C29

C30

C31

C32

C33

C34

C35

C36

C37

C38

C39

C40 END EBCDIC

This is a USGS EBCDIC header taken from the U.S. Geological Survey Coastal and Marine Geoscience Data System. These headers can look very different depending on who recorded the data. However, if you happen to see the C01 to C40, you know you’ve done something right. These markers are a relic from the old punch cards. Take a look at C21 and C22 in this case: usually you can find some processing history in these lines, which will help you understand your data.
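Once the header is in a text file, the same checks can be done with grep. This is a small sketch, assuming a header file whose lines start with the C markers; the sample lines are copied from the USGS header above, and the temporary file stands in for your own output file.

```shell
# Sketch: check the card markers and pull out the processing lines.
# The temporary file stands in for the extracted header text.
header=$(mktemp)
cat > "$header" <<'EOF'
C19 AMPLITUDE RECOVERY: NONE
C20 MAP PROJECTION ZONE ID COORDINATE UNITS
C21 PROCESSING:
C22 PROCESSING:
C23
EOF

# Count lines that start with "C" followed by a card number such as " 1" or "21".
cards=$(grep -c '^C[ 0-9][0-9]' "$header")

# Keep only cards C21 and C22, where this header stores processing history.
processing=$(grep -E '^C2[12]' "$header")

echo "cards found: $cards"
echo "$processing"
```

On a full header the count should come out as 40. Other headers may keep their processing history on different cards, so the C21 and C22 pattern is specific to this example.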

Feel free to test and use the code to your liking, and maybe leave a comment if it helped in any way.

Jesper Dramsch
