Last month, EAGE and PESGB organized the first machine learning workshop for geoscience in Europe. Naturally, I had every intention of going, and when I did, I met many of my favourite co-conspirators there.

The workshop was split into a day of keynotes and a day of technical talks. The keynotes ran alongside the last day of the PETEX conference, whereas the technical day took place in an adjacent room while the conference was being torn down. What sounds like a noisy terror was actually a pretty nice room.

The keynotes were what you would expect.

The Keynotes

Yves le Stunff provided some insights into Total’s collaboration with Google and showed some early prototypes.

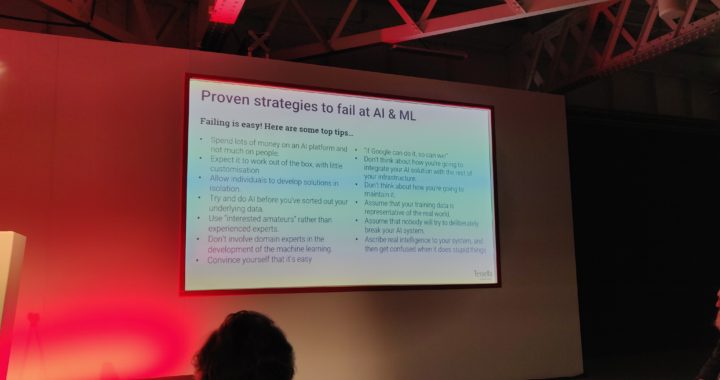

Matt Roberts from the AI consultancy Tessella brought the cross-industry perspective. He talked about the impact of AI on medicine and pharma, highlighted the main drivers of AI and algorithmic development in other industries, and discussed how oil and gas specifically might need to adapt its strategies.

Steve Freeman was the keynote speaker for Schlumberger. While the Schlumberger-blue presentation of darkness was familiar, the content was refreshing. Steve argued that you cannot expect engineers to come up with innovative AI products within three months, or even “on the side”.

Matt Hall from Agile Scientific and Jo Bagguley from the Oil and Gas Authority in the UK gave a talk on their recent joint initiative to bring Python to UK geoscientists and to organize two hackathons, which, from my perspective, looks like a success.

Eirik Larsen from Earth Science Analytics talked about their service, which sells an interface between AWS and scikit-learn to a less programming-inclined audience.

Then there was a panel discussion, the worst kind of discussion. But everyone got to ask whether “AI will steal our jobs”, and everyone got to answer “Noooo, not if you’re willing to adapt.” Some speakers seemed happier to be on that panel than others.

There was a coffee break without coffee, but I’m trying to forget that traumatic event.

The Technical Session

First things first: coffee was served. Second things second: of course I will plug my own work, which this time around was a poster and a talk on two very different topics:

Dramsch, Jesper Soeren; Lüthje, Mikael (2018): Information Theory Considerations In Patch-Based Training Of Deep Neural Networks On Seismic Time-Series. figshare. Poster.

A poster on how patch-based training introduces low-frequency noise into the learnt distribution. Batch normalization may help, but probably will not be enough. I suggest complex-valued convolutions.

Dramsch, Jesper Soeren; Amour, Frédéric; Lüthje, Mikael (2018): Gaussian Mixture Models for Robust Unsupervised Scanning-Electron Microscopy Image Segmentation of North Sea Chalk. figshare. Presentation.

A talk on automatic segmentation of scanning electron microscopy (SEM) images of clean chalk. This is outside my usual domain expertise, but it shows what comes out of collaborating with interesting people on interesting topics. Personally, I love SEM and the microscopy of foraminifera: I worked with SEM imaging during my school years and took micropaleontology at university. If you are looking for untapped potential: O’zapft is (Bavarian for “it’s tapped”).
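
For readers unfamiliar with Gaussian-mixture segmentation, here is a minimal sketch of the general idea, assuming a grayscale image given as a list of rows of pixel intensities. It fits a two-component 1D Gaussian mixture to the intensities with expectation-maximization and then labels each pixel by its most likely component. This is a generic pure-Python illustration, not the pipeline from the talk; the names `fit_gmm_1d` and `segment` are hypothetical, and in practice you would more likely reach for scikit-learn’s `GaussianMixture`.

```python
import math


def gaussian_pdf(x, mean, var):
    """Density of a 1D normal distribution N(mean, var) evaluated at x."""
    return math.exp(-((x - mean) ** 2) / (2.0 * var)) / math.sqrt(2.0 * math.pi * var)


def fit_gmm_1d(values, k=2, n_iter=100, var_floor=1e-6):
    """Fit a k-component 1D Gaussian mixture with expectation-maximization.

    Returns (weights, means, variances) with components sorted by mean.
    """
    n = len(values)
    ordered = sorted(values)
    # Deterministic initialization: spread the means over quantiles of the data.
    means = [ordered[int((j + 0.5) * n / k)] for j in range(k)]
    grand_mean = sum(values) / n
    total_var = sum((x - grand_mean) ** 2 for x in values) / n + var_floor
    variances = [total_var] * k
    weights = [1.0 / k] * k

    for _ in range(n_iter):
        # E-step: responsibility of each component for each value.
        resp = []
        for x in values:
            p = [weights[j] * gaussian_pdf(x, means[j], variances[j]) for j in range(k)]
            total = sum(p)
            resp.append([pj / total for pj in p] if total > 0 else [1.0 / k] * k)
        # M-step: re-estimate weights, means and variances from the responsibilities.
        for j in range(k):
            nj = sum(r[j] for r in resp) + 1e-12
            weights[j] = nj / n
            means[j] = sum(r[j] * x for r, x in zip(resp, values)) / nj
            variances[j] = (
                sum(r[j] * (x - means[j]) ** 2 for r, x in zip(resp, values)) / nj
                + var_floor  # floor keeps a tight cluster from collapsing to zero variance
            )

    order = sorted(range(k), key=lambda j: means[j])
    return ([weights[j] for j in order], [means[j] for j in order], [variances[j] for j in order])


def segment(image, k=2):
    """Label each pixel of a grayscale image (list of rows) with its most likely component."""
    pixels = [px for row in image for px in row]
    weights, means, variances = fit_gmm_1d(pixels, k)

    def label(px):
        scores = [weights[j] * gaussian_pdf(px, means[j], variances[j]) for j in range(k)]
        return max(range(k), key=lambda j: scores[j])

    return [[label(px) for px in row] for row in image]
```

On a tiny two-phase toy image, `segment([[10, 12, 200, 198], [11, 13, 202, 199]])` returns `[[0, 0, 1, 1], [0, 0, 1, 1]]`; label 0 is the darker phase because the components are sorted by mean. Being unsupervised, the method needs no labelled training images, only a choice of the number of components.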

It was a pleasure to see a good mix of topics at this workshop. The methods were mostly neural networks; Henry Blondelle’s talk on document classification, the Schlumberger talk on microseismic signal identification, and my own talk were the ones using non-NN methods.

The prevalent topics of inversion and automatic seismic interpretation were covered. Some claimed to do full-waveform inversion (FWI) in 1D, which is nice, considering that the problem was already tackled by the original inverter, Tarantola, between 1984 and 1991. Brendon Hall reproduced the paper.

Geophysical Insights contributed a talk on how they use self-organizing maps (SOMs) for automatic interpretation. It became apparent that choosing the right seismic attributes as input is very important. The talk was nonetheless quite insightful regarding the applications they have tested it on.
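
Why attribute choice matters follows from how a SOM works: each sample is a vector of attributes, and samples are matched to map nodes by Euclidean distance, so the attributes you feed in (and how they are scaled) define what “similar” means. Below is a minimal textbook-style sketch, assuming a 1D chain of nodes, a simple linear initialization, a linearly decaying learning rate and a shrinking Gaussian neighborhood. It is not Geophysical Insights’ implementation; the function names and the two-attribute toy input are hypothetical.

```python
import math


def standardize(samples):
    """Scale each attribute (column) to zero mean and unit variance."""
    dims = len(samples[0])
    n = len(samples)
    means = [sum(s[d] for s in samples) / n for d in range(dims)]
    stds = [math.sqrt(sum((s[d] - means[d]) ** 2 for s in samples) / n) or 1.0
            for d in range(dims)]
    return [[(s[d] - means[d]) / stds[d] for d in range(dims)] for s in samples]


def best_matching_unit(nodes, x):
    """Index of the node whose weight vector is closest (Euclidean) to x."""
    return min(range(len(nodes)),
               key=lambda i: sum((w - v) ** 2 for w, v in zip(nodes[i], x)))


def train_som(samples, n_nodes=4, n_epochs=50, lr0=0.5):
    """Train a 1D self-organizing map (a chain of nodes) on attribute vectors."""
    dims = len(samples[0])
    lo = [min(s[d] for s in samples) for d in range(dims)]
    hi = [max(s[d] for s in samples) for d in range(dims)]
    # Linear initialization: nodes spaced evenly between per-attribute min and max.
    span = max(n_nodes - 1, 1)
    nodes = [[lo[d] + (hi[d] - lo[d]) * i / span for d in range(dims)]
             for i in range(n_nodes)]
    sigma0 = n_nodes / 2.0
    steps = n_epochs * len(samples)
    t = 0
    for _ in range(n_epochs):
        for x in samples:
            frac = t / steps
            lr = lr0 * (1.0 - frac)                  # learning rate decays linearly
            sigma = max(sigma0 * (1.0 - frac), 0.5)  # neighborhood radius shrinks
            b = best_matching_unit(nodes, x)
            for i, w in enumerate(nodes):
                # Gaussian neighborhood on the grid: nodes near the winner move more.
                h = math.exp(-((i - b) ** 2) / (2.0 * sigma ** 2))
                nodes[i] = [wd + lr * h * (xd - wd) for wd, xd in zip(w, x)]
            t += 1
    return nodes


# Hypothetical toy input: two attributes per sample (e.g. amplitude, dominant frequency).
samples = standardize([[0.10, 30.0], [0.12, 31.0], [0.90, 60.0], [0.88, 58.0]])
nodes = train_som(samples)
print([best_matching_unit(nodes, s) for s in samples])
```

The `standardize` step is the design choice worth noticing: without it, the attribute with the larger numeric range (here the frequency-like column) would dominate the distance and effectively decide the clustering on its own.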

Nishitsuji gave an interesting talk on using active learning to increase the training data for well and seismic data classification, though I was too nervous before my own talk to remember it fully.

Personally, I enjoyed the “Latest Developments” session the most. Production forecasting with stacked LSTMs was something I had to learn about: stacked RNNs weren’t on my radar yet, and Loh et al. were very accommodating of my questions in the discussion.

Baardman from Aramco presented work on deblending with convolutional neural networks, which showed some convincing results. I’d love to see a simultaneous/Siamese NN for seismic deblending; that should have some nice properties.

Lukas Mosser talked about stochastic seismic inversion, which uses the generator network of a generative adversarial network (GAN) as an implicit model prior in a Bayesian framework. I find his work fantastic. Taking a CNN trained on images and applying it to seismic images is straightforward; understanding GANs well enough to take the generator and fold it into a Bayesian framework is something you have to breathe GANs for. The preprint is openly available.

Concluding Remarks

This was a very nice workshop, and I commend the technical committee for the mix of topics. Special thanks to Duncan Irving for being a driving force behind ML in geoscience and its communication. Everyone was very accommodating: I finished my talk a bit early, and the audience was kind enough to start a very good discussion with me to fill the time until the next talk.

All in all, I came away from these two days feeling that this was time well spent. I saw interesting developments both in established ideas and on the bleeding edge of ML applications in geoscience. And I got to present my work, which is always fun.

Please find the proceedings here: http://earthdoc.eage.org/publication/result?ediId=579

Jesper Dramsch