It’s often hard to get into new fields like machine learning or data science. One reason is that we don’t even know what we do not know.

This list is a compilation of sources that will help you, dear reader, fill the gaps on the way to using data science and machine learning. Here, we will not define what these things are, or why exactly you need them. The list is meant as something to come back to when you find gaps in your education while delving into machine learning.

Many readers will be students or come from underprivileged backgrounds. I have tried my best to curate free resources, or resources that in my opinion are worth the investment. You will see that some links are monetized, but these links were added after I had curated the content, so you will not see any funny business here.

Although the resources are arranged in the conventional “start here, then move on” way, as this is the easiest way to structure text, I believe this is not the best way to learn. I would suggest that you dive in deep and come back to this resource once you hit roadblocks. You will find that the fast.ai course is very interesting and will throw you right into the fascinating world of deep learning. Sometimes you will realize that maybe your math or your Python skills are lacking; then this list can be used as a central resource to find a way to fill the gap.

Or read it front to back, I’m not your mother after all.

Mathematics

Mathematics lies at the core of machine learning. The main components that you have to understand are linear algebra and calculus. Linear algebra describes how neural networks themselves compute their outputs, and calculus is what training neural networks is built on. If you had trouble with math in school, like me, you may want to start by getting the basics right. Having a good foundation will make your life easier in every subsequent step.

Precalculus and General applications in Math

Khan Academy is fantastic at replacing the bad math education you endured in school. If you find you are out of your depth with the videos below, start where school left you with Khan Academy, then use both 3Blue1Brown and Khan Academy to learn the math necessary for modern machine learning.

Linear Algebra

This is the best introduction to vectors and matrix calculations I have ever seen.

Calculus

Likewise, this is the best overview of calculus I have ever seen.

Coding Focused Linear Algebra

This course focuses on translating math to the text editor. It’s good to understand how you can actually put vector logic into your computer. The course has both written material and video content, and touching the code yourself is always the best way to learn.

fast.ai course on Computational Linear Algebra
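To give a feeling for what "putting vector logic into your computer" can look like, here is a minimal sketch in plain Python. It is not taken from the course; the function names, the weights and the input are all made up for illustration. It computes a single fully connected layer as a matrix-vector product followed by a ReLU.

```python
def dot(u, v):
    """Dot product of two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    return sum(a * b for a, b in zip(u, v))


def matvec(matrix, vector):
    """Matrix-vector product: one dot product per matrix row."""
    return [dot(row, vector) for row in matrix]


def relu(vector):
    """Element-wise ReLU nonlinearity."""
    return [max(0.0, x) for x in vector]


def dense_layer(weights, bias, inputs):
    """One fully connected layer: relu(W x + b)."""
    pre_activation = [z + b for z, b in zip(matvec(weights, inputs), bias)]
    return relu(pre_activation)


# Hypothetical 2x3 weight matrix, bias and input, chosen only for illustration.
W = [[1.0, 0.0, -1.0],
     [0.5, 0.5, 0.5]]
b = [0.0, -1.0]
x = [2.0, 1.0, 3.0]

output = dense_layer(W, b, x)
print(output)
```

In practice you would most likely reach for a numerical library instead of nested lists, but writing the loops out once makes it clear what the library does for you.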

Optimization

Numerical optimization is a very general skill, but it lies at the basis of every machine learning application. Be it support vector machines or neural networks, they all minimize an objective function. Understanding how such functions are minimized is extremely important if you want to start doing some work in machine learning. There is a nice introductory video series available. Additionally, you can check out the two books below: one covers optimization broadly, the other focuses on convex problems.

Metaheuristics essentials book

Convex optimization book
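As a rough illustration of what "minimizing an objective function" means, here is a minimal gradient descent sketch. The objective, the learning rate and the number of steps are assumptions picked for the example and do not come from the books above.

```python
def objective(x):
    """Convex toy objective with its minimum at x = 3."""
    return (x - 3.0) ** 2


def gradient(x):
    """Analytic derivative of the toy objective."""
    return 2.0 * (x - 3.0)


def gradient_descent(grad, start, learning_rate=0.1, steps=100):
    """Repeatedly step against the gradient, starting from `start`."""
    x = start
    for _ in range(steps):
        x -= learning_rate * grad(x)
    return x


x_min = gradient_descent(gradient, start=0.0)
print(x_min, objective(x_min))
```

Real objective functions have many more parameters and are rarely this well behaved, which is where the material on convex and non-convex problems comes in.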

Programming in Python

I am a Python fan. It’s an extremely readable, high-level programming language. Pure Python is comparatively slow, but it can call into code written in faster languages. When we use the deep learning framework TensorFlow in Python, Python is just a convenient interface to the real C++ TensorFlow, which is extremely optimized and fast. Data has to be cleaned, transformed and fed into the hand-crafted algorithms, so learning at least the basics of Python will take you a long way in data science. Pandas is a Python library that you will find very helpful when working with data, which is why it is included in this list.

- Codecademy Python interactive course

- Automate the boring stuff – Educational Python book (Amazon)

- Introduction to Pandas
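The "clean, then transform" routine mentioned above can be sketched with nothing but the standard library. The station names, column names and values below are invented for the example; Pandas offers higher-level tools for the same steps once you are comfortable with the basics.

```python
import csv
import io

# Hypothetical raw data with stray whitespace, a missing value and mixed case.
RAW_CSV = """station,temperature_c
 Oslo ,4.5
bergen,
TROMSO, -2.0
"""


def clean_rows(text):
    """Parse CSV text, normalise names and drop rows without a temperature."""
    cleaned = []
    for row in csv.DictReader(io.StringIO(text)):
        station = row["station"].strip().title()
        raw_value = row["temperature_c"].strip()
        if not raw_value:
            continue  # skip rows with a missing measurement
        cleaned.append({"station": station, "temperature_c": float(raw_value)})
    return cleaned


def to_fahrenheit(rows):
    """Transform step: add a Fahrenheit column to every cleaned row."""
    return [{**r, "temperature_f": r["temperature_c"] * 9 / 5 + 32} for r in rows]


table = to_fahrenheit(clean_rows(RAW_CSV))
for record in table:
    print(record)
```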

Statistics

Machine learning is just statistics at scale. Knowing the statistical basics is very important if we do not want to make fools of ourselves when throwing a learning machine at data. There is a free classic book and several free courses. I like the following for their specific insights into descriptive and inferential statistics, as well as for a general basic stats course.

- The Elements of Statistical Learning book (free) or on Amazon

- Descriptive Statistics – Udacity course

- Inferential Statistics – Udacity course

- Basic Statistics – Coursera – University of Amsterdam
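To make the difference between descriptive and inferential statistics a bit more concrete, here is a small sketch using Python’s built-in `statistics` module. The sample values are made up, and the confidence interval rests on a normal approximation, which is an assumption of this example and not a recommendation from the courses above.

```python
import statistics

# Hypothetical sample, e.g. ten measurements of the same quantity.
sample = [4.8, 5.1, 5.0, 4.9, 5.3, 5.2, 4.7, 5.0, 5.1, 4.9]

# Descriptive statistics: summarise the data we actually have.
mean = statistics.mean(sample)
median = statistics.median(sample)
std_dev = statistics.stdev(sample)  # sample standard deviation (n - 1)

# Inferential statistics: say something about the unseen population.
# Assumption: normal approximation for the sampling distribution of the mean.
# For a sample this small, a t-distribution would give a slightly wider interval.
z = statistics.NormalDist().inv_cdf(0.975)  # roughly 1.96 for a 95% interval
std_error = std_dev / len(sample) ** 0.5
confidence_interval = (mean - z * std_error, mean + z * std_error)

print(mean, median, std_dev)
print(confidence_interval)
```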

Design Thinking

Design thinking may be the odd one out. However, this thought process has helped me immensely in improving both my code and my interaction with colleagues. Ego is the Enemy is a book about stoic philosophy that isn’t directly about design thinking, but it is a good reminder to keep ourselves in check, even when we are doing cutting-edge research.

- Intro to Design thinking – Microsoft course

- Intro course into Design thinking from the d.school itself

- Ego is the Enemy – Ryan Holiday

- Empathy: an essential skill in Software Development

Reproducibility

Making your research reproducible is very important. However, just sharing your code often isn’t enough to make it easily reproducible.

Let’s be honest here, we scientists are mostly hacking together our code and sometimes we don’t know ourselves why it’s working. The following resources will help you create documentation and bundle your code together with the software needed to run it, so that it can be shared. Jupyter is fantastic for exploring data with code. Docker is fantastic at bundling your code and the necessary software for it to be run by peers.

- Jupyter Notebook course

- Introduction to Virtual Machines, Containers and specifically Docker

- Docker introduction

Data Science

Data science is the category that machine learning fits into. Analyzing data in its various forms is something of an art and takes practice. Kaggle stands uncommented, as it’s a resource you have to know in this field. And of course, following the Way of the Geophysicist.

- Skillshare — Data Science with Python

- Kaggle.com

- Intro to Data Analysis — Udacity course

- Tableau Software — Data Analysis Out of the Box Software

- Spotfire Software — Data Analysis Out of the Box Software

Machine Learning

Classic machine learning includes methods such as logistic regression and support vector machines. While deep learning is very shiny, a simpler model is often preferable, so learn these first.

- Fantastic visual introduction to Decision Trees

- Andrew Ng course on Coursera

- Scikit-Learn Tutorials

- CS231n Stanford course – Machine Learning Examples
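To show how small a "simpler model" can be, here is a sketch of logistic regression with one input feature, written in plain Python. The toy data, the learning rate and the number of epochs are assumptions for the example. In real work you would more likely use a library such as Scikit-Learn than write this by hand.

```python
import math


def sigmoid(z):
    """Logistic function mapping any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic_regression(xs, ys, learning_rate=0.1, epochs=5000):
    """Fit p(y=1 | x) = sigmoid(w * x + b) by gradient descent on the log loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradient of the mean log loss with respect to w and b.
        errors = [sigmoid(w * x + b) - y for x, y in zip(xs, ys)]
        grad_w = sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = sum(errors) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


def predict(w, b, x):
    """Return the predicted class label (0 or 1) for input x."""
    return 1 if sigmoid(w * x + b) >= 0.5 else 0


# Hypothetical toy data: hours studied versus passing an exam.
hours = [0.5, 1.0, 1.5, 2.0, 3.0, 3.5, 4.0, 4.5]
passed = [0, 0, 0, 0, 1, 1, 1, 1]

w, b = fit_logistic_regression(hours, passed)
print([predict(w, b, x) for x in hours])
```

The whole model is two numbers, `w` and `b`, which makes it easy to inspect and explain. That is a large part of why simple models are often the better first choice.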

Deep Learning

All the rage at the moment. Here are three free courses, of which my favourites are the fast.ai courses. The Deep Learning book is free and fantastic as well.

- Fast AI course Part 1

- Fast AI course Part 2

- Deep Learning Book (free) by Goodfellow et al. or on Amazon

- CS231n Stanford course – Deep Learning track

Reinforcement Learning

Reinforcement learning is even more the rage than deep learning. It relies on a metaphorical “agent”, which is an AI system that learns to make decisions. This agent is released into an environment, where it learns by itself by maximizing some sort of reward.

- Two introductory articles: Karpathy and Distill.pub

- Reinforcement Learning Book (free) by Sutton and Barto or on Amazon

- UCL Reinforcement Learning course by David Silver

- Deep Reinforcement Learning from Berkeley
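The agent, environment and reward loop described above can be sketched with tabular Q-learning on a tiny, made-up corridor world. Everything here is an assumption chosen for illustration, including the environment, the reward and the hyperparameters. The resources above cover far more capable methods.

```python
import random

N_STATES = 5          # corridor positions 0..4; position 4 is the goal
ACTIONS = (-1, +1)    # step left or step right


def step(state, action):
    """Environment: move along the corridor, reward 1.0 on reaching the goal."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Tabular Q-learning with an epsilon-greedy agent."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action index]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                a = rng.randrange(2)  # explore, or break a tie at random
            else:
                a = 0 if q[state][0] > q[state][1] else 1  # exploit
            next_state, reward, done = step(state, ACTIONS[a])
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q


q_table = train()
policy = ["right" if right > left else "left" for left, right in q_table[:-1]]
print(policy)
```

Nobody tells the agent that "right" is the correct direction. It only ever sees the reward at the goal, and the preference for stepping right emerges from the updates.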

Cloud Computing

Cloud computing and HPC on clusters are at the heart of deep learning. Many people do not have a desktop PC anymore, so flocking to Google Cloud or AWS is natural. On Google Colab you will get a free GPU to use, but the setup can be tricky for all of them. These resources try to get you on your way.

- Google Colab Free GPU

- CS231n Amazon Web Services Setup

- Coursera Google Cloud Setup

- Google Cloud Getting Started

- Paperspace Cloud computing platform

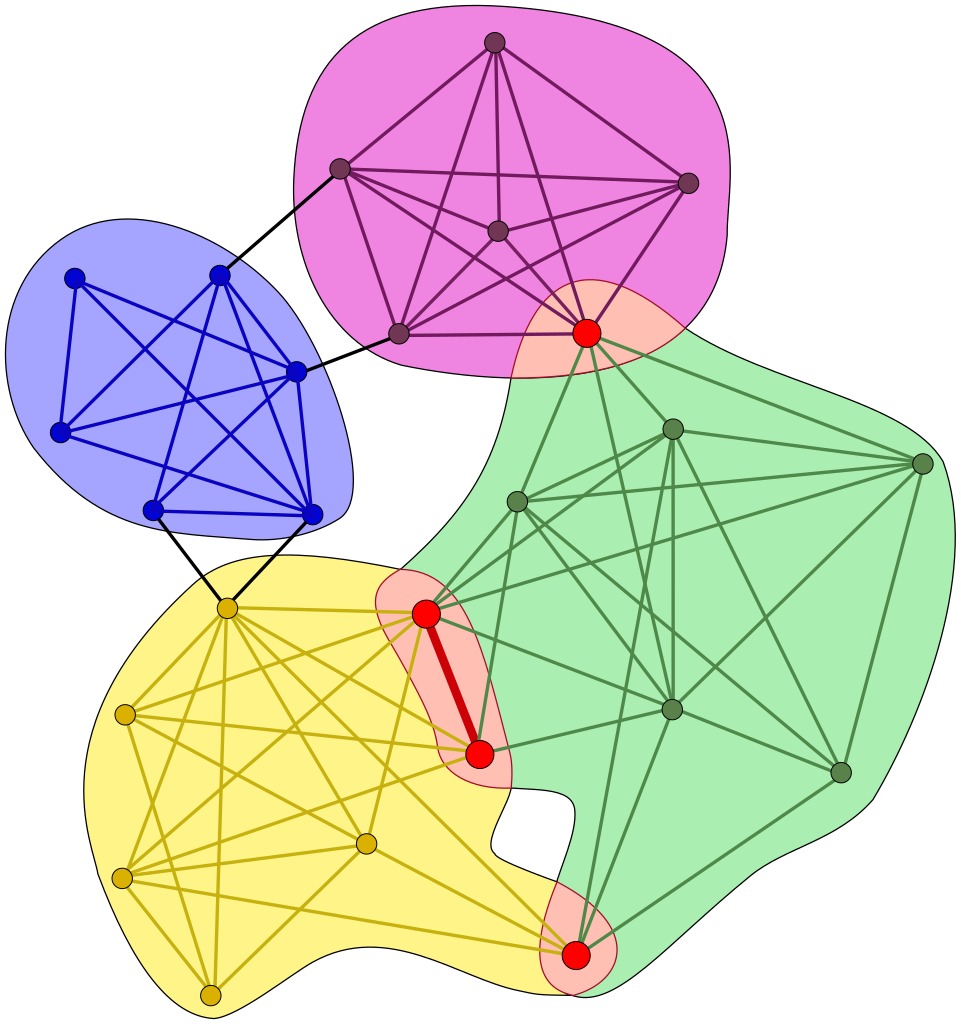

Graph Theory

Once you have these concepts down, you can dive off the deep end. Neural networks can mathematically be described as directed acyclic graphs. In the case of some recurrent neural networks, the topology is actually a cyclic directed graph. Additionally, capsule networks are a new type of network that promises to change everything about neural nets. They are only possible due to dynamic routing and stray from the basic linear concepts that come with standard convolutional neural networks. To understand these concepts, graph theory comes in very handy.

- Intro to Graph Conv Nets

- Coursera Course on Algorithms on Graphs

- Python Package NetworkX for graph calculations

- Gephi – Open source Graph visualization with great open online tutorials

- Johns Hopkins University lecture notes on adaptive routing, essential for capsule nets and helpful for graph convolutional nets
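To make the graph view of a network a bit more tangible, here is a small sketch using `graphlib` from the Python standard library (Python 3.9 or newer). The node names and the toy topology are invented for the example. It shows that a feed-forward network, seen as a directed acyclic graph, has a valid evaluation order, while adding a feedback edge creates a cycle.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical feed-forward network: each node maps to the nodes it depends on.
feed_forward = {
    "hidden_1": {"input"},
    "hidden_2": {"input"},
    "output": {"hidden_1", "hidden_2"},
}

# A directed acyclic graph has a topological order, which is a valid order
# in which to evaluate the nodes during a forward pass.
evaluation_order = list(TopologicalSorter(feed_forward).static_order())


def has_cycle(graph):
    """Return True if the dependency graph contains a directed cycle."""
    try:
        TopologicalSorter(graph).prepare()
    except CycleError:
        return True
    return False


# Feeding the output back into a hidden node creates a cycle,
# similar to the recurrent case described above.
recurrent = {**feed_forward, "hidden_1": {"input", "output"}}

print(evaluation_order)
print(has_cycle(feed_forward), has_cycle(recurrent))
```

For anything beyond toy examples, the NetworkX package listed above provides far more graph algorithms than the standard library does.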

Useful Tips and Tricks

These are links that don’t quite fit in other categories but are concepts and resources that are important.

- ML Cheatsheet – Visual examples of everyday (and not so everyday) ML concepts

- Neural Network Zoo – Most common architectures

- Nash Equilibrium on Khan Academy – concept from game theory that is important for generative adversarial networks

Stay Up to Date

A field that evolves at this kind of speed is hard to keep up with. Here are three resources I use to learn about the newest flavours of deep learning.