Many a time I have cursed when I received a new seismic file. Be it a 2D line, a 3D cube or pre-stack data, most companies seldom adhere to the standard.

The Standard

SEG-Y as defined by the standard is now in revision 2. Most files will likely still be rev 1 these days. There is an inherent inertia in changing existing data types. Data managers would call me crazy for even mentioning the idea.

Headers

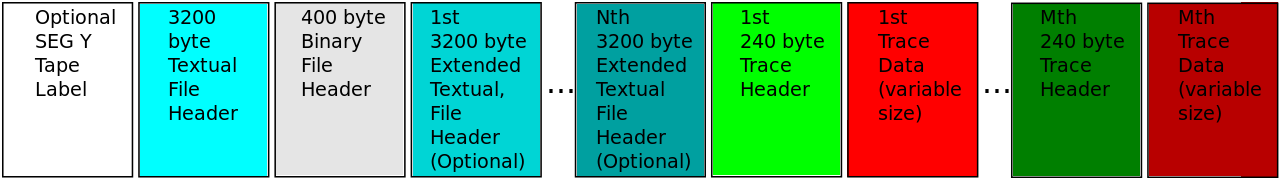

I have written about the peculiar EBCDIC header before. It’s a 40-line, 80-character header (3200 bytes in total) that is taken directly from punch cards and mirrored in a digital setting. It contains free text that is supposed to tell you about the acquisition and processing of the data, and sometimes about peculiarities of the dataset, such as deviations from the official standard. This header exists once per dataset.
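A minimal sketch of reading this header in Python, assuming it really is EBCDIC (some files in the wild store it as ASCII instead). The function name `decode_text_header` and the fake header content are made up for illustration; `cp037` is one of the EBCDIC codecs that ships with Python.

```python
# Sketch: decode a SEG-Y textual header, assuming EBCDIC (codec cp037).
TEXT_HEADER_BYTES = 40 * 80  # 40 lines of 80 characters = 3200 bytes


def decode_text_header(raw, encoding="cp037"):
    """Split the 3200-byte textual header into 40 lines of 80 characters."""
    if len(raw) != TEXT_HEADER_BYTES:
        raise ValueError(f"expected {TEXT_HEADER_BYTES} bytes, got {len(raw)}")
    text = raw.decode(encoding)
    return [text[i:i + 80] for i in range(0, len(text), 80)]


# Build a fake header for demonstration instead of reading a real file.
fake_lines = [f"C{n:2d} EXAMPLE LINE".ljust(80) for n in range(1, 41)]
fake_raw = "".join(fake_lines).encode("cp037")

header_lines = decode_text_header(fake_raw)
print(header_lines[0].rstrip())
```

With a real file you would pass the first 3200 bytes of the file instead of `fake_raw`, and fall back to `encoding="ascii"` if the result looks like gibberish.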

The binary header also exists once per dataset. It has a fixed length of 400 bytes, and within this length it is segmented into compartments, each of which can house a certain type of number. The header is not readable unless you have the SEG-Y definition to guide you along, for two reasons. First, the compartments are not evident from the data itself. The binary header on disk looks like a series of zeros and ones, and one would have to guess whether a sequence is one type of number or another. Second, even if someone finds the correct constellation of compartments, they see pure numbers. A SEG-Y binary header does not contain any information about the meaning of a header word, only the value. For example, the “line number” header word might contain the number 42 but has no means or space to tell the user that 42 is in fact the line number.
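To make the "compartments" idea concrete, here is a small sketch using Python's `struct` module. The byte positions are the ones I know from SEG-Y rev 1 for these four header words; the dictionary name, the function name and the fake values are my own, and I read the 2-byte words as signed integers here.

```python
import struct

BINARY_HEADER_BYTES = 400

# name: (0-based offset inside the binary header, struct format).
# ">" means big-endian, "i" is a 4-byte integer, "h" a 2-byte integer.
BINARY_HEADER_FIELDS = {
    "line_number": (4, ">i"),          # file bytes 3205-3208
    "sample_interval_us": (16, ">h"),  # file bytes 3217-3218
    "samples_per_trace": (20, ">h"),   # file bytes 3221-3222
    "data_format_code": (24, ">h"),    # file bytes 3225-3226
}


def parse_binary_header(raw):
    """Attach names to the bare numbers stored in the binary header."""
    if len(raw) != BINARY_HEADER_BYTES:
        raise ValueError(f"expected {BINARY_HEADER_BYTES} bytes, got {len(raw)}")
    return {
        name: struct.unpack_from(fmt, raw, offset)[0]
        for name, (offset, fmt) in BINARY_HEADER_FIELDS.items()
    }


# Fake header for demonstration: line 42, 4 ms sampling, 1501 samples, IBM floats.
fake = bytearray(BINARY_HEADER_BYTES)
struct.pack_into(">i", fake, 4, 42)
struct.pack_into(">h", fake, 16, 4000)
struct.pack_into(">h", fake, 20, 1501)
struct.pack_into(">h", fake, 24, 1)

parsed = parse_binary_header(bytes(fake))
print(parsed)
```

The meaning lives entirely in the lookup table, not in the file. Take the table away and all that is left is 400 bytes.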

Each data trace in the SEG-Y file has its own header, and this is usually where every company likes to mess with the standard, and where reading SEG-Y data quickly fails. Each trace header once again has a fixed length (240 bytes) and is placed right before the data trace. It contains information about where the trace was recorded, its length, its sampling and its bin location. Similar to the binary header, these headers are segmented into compartments that once again do not describe what number they contain. That means when you try to load data where the headers were modified from the standard, trace number 1 might be read as an arbitrary number such as 10025533 and trace number 2 as another arbitrary value such as -32034456. This would be a rather unfortunate mistake.

Making sure the modified header definitions are present in the data loader is essential.
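One way to handle this is to keep the byte positions in a table the user can override. The sketch below assumes the rev 1 positions for inline (bytes 189-192) and crossline (bytes 193-196). The "vendor" layout that stores them at bytes 9 and 21 is a hypothetical example, and all names are made up for illustration.

```python
import struct

TRACE_HEADER_BYTES = 240

# name: (1-based start byte as documented in the standard, struct format)
STANDARD_LAYOUT = {
    "inline": (189, ">i"),
    "crossline": (193, ">i"),
}

# Hypothetical vendor layout that moved both values elsewhere.
VENDOR_LAYOUT = {
    "inline": (9, ">i"),
    "crossline": (21, ">i"),
}


def read_trace_header(raw, layout=STANDARD_LAYOUT):
    """Read selected header words using a caller-supplied byte layout."""
    if len(raw) != TRACE_HEADER_BYTES:
        raise ValueError(f"expected {TRACE_HEADER_BYTES} bytes, got {len(raw)}")
    return {
        name: struct.unpack_from(fmt, raw, start - 1)[0]
        for name, (start, fmt) in layout.items()
    }


# Fake trace header written the "vendor" way: inline 1200, crossline 345.
fake = bytearray(TRACE_HEADER_BYTES)
struct.pack_into(">i", fake, 9 - 1, 1200)
struct.pack_into(">i", fake, 21 - 1, 345)

wrong = read_trace_header(bytes(fake))                 # standard positions
right = read_trace_header(bytes(fake), VENDOR_LAYOUT)  # modified positions
print(wrong, right)
```

Here the standard layout silently returns zeros, because those bytes are empty in the fake header. In a real file they might hold something else entirely, which is how the nonsense trace numbers come about.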

The Shape of SEG-Y

Every trace header describes the various attributes a trace has, such as its location or number within the acquisition sequence. While traces are often written in acquisition order, the format makes no assumptions about the actual order. This is very nice for archiving and transferring data. If you see the abominations companies do to trace headers, you can imagine the “improvements” that would be made to a “standard shape” of the data.

Unfortunately, this means the time series of each trace is saved separately. In many computer vision tasks, such as looking at 3D seismic in Petrel or deep learning, the traces have to be arranged in a geometry that treats them not as separate time series but as a coherent spatial array of adjacent time series. This is nothing SEG-Y is directly capable of. The data has to be transferred to a suitable format.
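As a rough sketch of that rearrangement, assume the inline and crossline numbers have already been read from each trace header. The function `build_cube` and the tiny fake traces are made up for illustration, and in practice you would probably use a NumPy array rather than nested lists.

```python
def build_cube(traces):
    """Arrange traces into a grid indexed as cube[inline][crossline][sample].

    `traces` is a list of (inline, crossline, samples) tuples in any order.
    Bins without a trace stay None.
    """
    inlines = sorted({il for il, _, _ in traces})
    crosslines = sorted({xl for _, xl, _ in traces})
    il_index = {il: i for i, il in enumerate(inlines)}
    xl_index = {xl: j for j, xl in enumerate(crosslines)}

    cube = [[None] * len(crosslines) for _ in inlines]
    for il, xl, samples in traces:
        cube[il_index[il]][xl_index[xl]] = list(samples)
    return inlines, crosslines, cube


# Four fake two-sample traces, deliberately out of order.
traces = [
    (101, 21, [0.3, 0.4]),
    (100, 20, [0.0, 0.1]),
    (101, 20, [0.2, 0.3]),
    (100, 21, [0.1, 0.2]),
]

inlines, crosslines, cube = build_cube(traces)
print(inlines, crosslines, cube[0][0])
```

The order on disk no longer matters once the header values decide where each trace goes, but that only works if those header values were read from the right bytes.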

The case for SEG-Y

The most positive thing to note about SEG-Y is that it is written to disk without encoding the data. It is and will always be directly readable by a computer, without knowing the specific encoding of the data. Well, mostly. Unfortunately, computer science itself has brought two quirks to this universality. Floating point numbers, which is nerdspeak for decimal numbers, are not created equal. The first problem is that the bytes of a number written to disk can be ordered starting from either end, which is called endianness. The next problem is going meta in a blog post about standards: there are two standards for floating point numbers, which you will see as IEEE and IBM. So, in theory it works out of the box. In practice you need some software checks or user-supplied information about the floating point standard and endianness.
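To show how much these two quirks matter, here is a small sketch that interprets the same four bytes three ways. The IBM conversion follows the usual description of the format (1 sign bit, a 7-bit base-16 exponent biased by 64, and a 24-bit fraction). The function name `ibm32_to_float` is my own.

```python
import struct


def ibm32_to_float(raw):
    """Convert 4 big-endian bytes holding an IBM single-precision float."""
    (word,) = struct.unpack(">I", raw)
    sign = -1.0 if word >> 31 else 1.0
    exponent = (word >> 24) & 0x7F                   # base-16 exponent, bias 64
    fraction = (word & 0x00FFFFFF) / float(1 << 24)  # 24-bit fraction in [0, 1)
    return sign * fraction * 16.0 ** (exponent - 64)


raw = bytes.fromhex("C276A000")  # textbook IBM encoding of -118.625

as_ibm = ibm32_to_float(raw)
as_ieee_big = struct.unpack(">f", raw)[0]     # same bytes read as IEEE, big-endian
as_ieee_little = struct.unpack("<f", raw)[0]  # same bytes read as IEEE, little-endian

print(as_ibm, as_ieee_big, as_ieee_little)
```

Only the first interpretation gives the value that was written. The other two produce perfectly valid numbers that are simply wrong, which is why a loader needs to be told, or has to check, which combination applies.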

It’s easy to convert SEG-Y data to a format that is more suitable for the application or task at hand. So maybe instead of defining new standards, it’s time to build a tool that takes SEG-Y and transforms it into a format more suited for the task.

Jesper Dramsch
